Melbourne University Law Review


ALYSIA BLACKHAM[*]

Algorithmic discrimination represents a growing challenge for equality law. While the elimination of discrimination in employment and occupation is a fundamental obligation of International Labour Organization members, Australian equality law remains ill-adapted to respond to emerging risks. This article argues that the automated application of machine learning algorithms presents five critical challenges to equality law related to the scale of data used; their speed and scale of application; lack of transparency; growth in employer control; and the complex supply chain associated with digital technologies. Considering principles from privacy and data protection law, third-party and accessorial liability, and collective solutions, this article puts forward reforms and suggestions to better set the framework for accountability for algorithmic discrimination in the workplace.


Digital inequalities at work are pervasive yet difficult to challenge. Employers are increasingly using algorithmic tools in recruitment, work allocation, performance management, employee monitoring and dismissal.[1] According to a survey conducted by the Society for Human Resource Management, nearly one in four companies in the United States (‘US’) use artificial intelligence (‘AI’) in some form for human resource management.[2] Of those surveyed who do not use automation for such processes, one in five organisations plan to either use or increase their use of such AI tools for performance management over the next five years.[3]

The elimination of discrimination in employment and occupation is a fundamental obligation of International Labour Organization (‘ILO’) members, and is included in the ILO Declaration on Fundamental Principles and Rights at Work.[4] This obligation invariably extends to the digital sphere. It is critical, then, to create a meaningful framework for accountability for these algorithmic tools. At present, though, it is unclear who is responsible for monitoring the risks of algorithmic decision-making at work: is it the technology companies who develop and market these algorithmic products? The employers using algorithmic tools? Or the individual workers who might experience inequality as a result of algorithmic decision-making? Or, indeed, all three?

This article considers how we might create a framework for accountability for digital inequality, specifically concerning the use of algorithmic tools in the workplace that disadvantage groups of workers. In Part II, I consider how algorithms and algorithmic management might be deployed in the workplace, and the way this might address or exacerbate inequality at work. I argue that the automated application of machine learning (‘ML’) algorithms in the workplace presents five critical challenges to equality law[5] related to: the scale of data used; their speed and scale of application; lack of transparency; growth in employer control; and the complex supply chain associated with digital technologies. In Part III, I consider principles that emerge from privacy and data protection law, third-party and accessorial liability, and collective solutions to reframe the operation of equality law to respond to these challenges. Focusing on transparency, third-party and accessorial liability, and supply chain regulation, I draw on comparative doctrinal examples from the European Union (‘EU’) General Data Protection Regulation (‘GDPR’),[6] the Australian Privacy Principles (‘APP’)[7] and Fair Work Act 2009 (Cth) (‘Fair Work Act’), and collectively negotiated solutions to identify possible paths forward for equality law. This analysis adopts comparative doctrinal methods, reflecting what Örücü describes as a ‘problem-solving’ or sociological approach to comparative law, examining how different legal systems have responded to similar problems in contrasting ways.[8] The fact that these jurisdictions are facing a similar problem warrants the comparison;[9] differences in national context increase the potential for mutual learning.[10] The GDPR is seen as setting the standard or benchmark for global data protection regulation:[11] it is therefore considered here as an important comparator to Australian provisions.

Drawing on these principles, I argue that there is a need to develop a meaningful accountability framework for discrimination effected by algorithms and automated processing, with differentiated responsibilities for algorithm developers, data processors and employers. While discrimination law — either via claims of direct or indirect discrimination — might be adequately framed to accommodate algorithmic discrimination,[12] I argue for a need to reframe equality law around proactive positive equality duties that better respond to the risks of algorithmic management. This represents a critical and innovative contribution to Australian legal scholarship, which has rarely considered the implications of technological and algorithmic tools for equality law.[13] Given the critical differences between Australian, US and EU equality law,[14] there is a clear need for jurisdiction-specific consideration of these issues.

The use of algorithms and algorithmic management has significant implications for workplace equality.[15] Algorithms are a set of instructions telling a computer how to process certain pieces of information (input data) to create some sort of useful result (output).[16] Non-learning based algorithms are designed by humans: a human manually formulates the steps required to generate the outputs based on the inputs.[17] By contrast, ML algorithms utilise a ‘model’ that is trained to identify the relevant features in its input data to create its output.[18] ML often uses ‘labelled’ data sets — that have been categorised by humans — to ‘learn’ what features are relevant.[19] However, there is no explicit human involvement in the reasoning behind decisions.[20] For example, an ML algorithm, given a collection of labelled images, could determine if a new image was of a cat, but we would not necessarily know why it had arrived at that decision.
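The distinction between a hand-written rule and a learned model can be illustrated with a minimal sketch. This is not drawn from the sources cited here: the function names, the single 'years of experience' feature and the toy labelled data are all hypothetical, and real ML systems are far more complex. In the first function a human states the decision step explicitly; in the second, the cut-off is inferred from human-labelled examples, so no person ever writes down the reasoning.

```python
def hand_written_rule(years_experience: int) -> bool:
    """Non-learning algorithm: a human wrote the decision step explicitly."""
    return years_experience >= 3


def train_threshold(labelled):
    """Toy 'learning': pick the cut-off that best reproduces the human labels.

    `labelled` is a list of (years_experience, label) pairs (hypothetical data).
    """
    candidates = sorted({x for x, _ in labelled})

    def correct(threshold):
        # Count how many labelled examples the rule "x >= threshold" gets right.
        return sum((x >= threshold) == y for x, y in labelled)

    # max() returns the first candidate with the highest count.
    return max(candidates, key=correct)


# Hypothetical labelled data set: (years of experience, labelled 'suitable'?)
labelled_data = [(0, False), (1, False), (2, False), (4, True), (5, True), (7, True)]
learned_cutoff = train_threshold(labelled_data)


def learned_rule(years_experience: int) -> bool:
    """The model's output depends on a value no human chose directly."""
    return years_experience >= learned_cutoff
```

Even in this toy case, the learned cut-off is a product of whatever labels were supplied; if those labels encode past bias, the learned rule will reproduce it.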

In the workplace, then, algorithmic management is characterised by: the gathering of significant amounts of data about individual employees and their work (as data ‘inputs’); the use of digital tools to sort, analyse and process this data (this might include the use of algorithms and ML); and the use of automated insights to inform, direct or determine organisational decisions.[21] Automated tools, AI and algorithms may be used across the employment life cycle, from recruitment, to managing performance and development, to termination.[22]

In recruitment, AI might be used to identify the best candidates for a role based on publicly available data, like social media profiles, or to screen and assess candidates during recruitment. Chatbots can be used to engage with candidates during recruitment and automated tools can be used to screen résumés, rank candidates, and even determine salary and job offers.[23] In relation to training, performance and development, chatbots might be used to help to look up information, such as company policies or benefits, or to provide recommendations for learning and training to employees.[24] Automated tools might be used to track workers’ locations, speed, fitness, productivity and work accuracy;[25] to allocate shifts; to facilitate ‘management by algorithm’ or ‘electronic performance monitoring’;[26] to compile worker ratings (as in ‘gig’ work on Uber or Airtasker); or to allocate work tasks.[27] Those who underperform might receive a warning or have their position terminated.[28] These automated systems and AI processes are being rolled out across both blue- and white-collar roles, affecting both service and professional jobs alike.[29]

At their best, the use of algorithms at work could save employers time and cost, increase certainty, overcome bias and human error, lead to more objective and fairer decision-making, and even advance equality by identifying employers’ blind spots and discriminatory structures.[30] As Estlund argues, ‘[w]here automation is feasible and cost-effective, it offers the ultimate exit from the costs, risks, and hassles of employing people, including those that stem from the law of work’, including discrimination law.[31] Algorithms are seen as less ‘risky’ than human labour: humans are biased and discriminate against others, whereas algorithms are ‘objective’ and ‘unbiased’, reflecting the ‘double-edged nature of automation for workers’.[32] Thus, while the use of algorithms might save time and cost, this might be achieved at the expense of human jobs.[33] As Estlund argues:

Automation is thus part of a larger menu of options by which those who own or manage capital seek to maximize their returns. Those who supply the robots and the algorithms that replace human labor and destroy jobs are responding to demand from firms seeking more profitable ways to produce other goods and services. ... [I]f robots or algorithms can supply those inputs even more quickly, more reliably, more cheaply, or with less risk, then lead firms will turn to them instead of human labor.[34]

Automation might eliminate some of the riskiest jobs, or riskiest aspects of jobs, leading to better jobs and better conditions.[35] If the worst jobs are eliminated, and ‘the displaced workers end up with better jobs, this ... may show innovation and creative destruction at their most virtuous’.[36] De Stefano argues, however, that this quantitative focus on job loss is too simplistic: automation and algorithms will not just eliminate ‘bad’ jobs, but may also undermine the quality of existing jobs, including through workplace control, surveillance and monitoring.[37] Requiring humans to interact with automated processes in the workplace can undermine human dignity, dehumanise human workers and increase workplace alienation.[38] A focus on job loss also ignores the labour-intensive nature of many algorithms and forms of automation. Many ML algorithms are manually trained by humans and it is labour-intensive to implement and navigate automated workplace systems.[39]

At their worst, then, algorithmic management tools could be fundamental drivers of inequality at work. The oft-cited example in this space is Amazon’s attempted development of an algorithmic recruitment tool. The tool reviewed applicants’ résumés to determine which applicants were most likely to be successful recruits.[40] Applicants were graded from one to five stars.[41] The tool was ultimately scrapped, however, because it systematically discriminated against women applicants for software development and technical jobs.[42] The tool had been trained on résumés (and, presumably, hiring outcomes) from 10 years of job applicants; men are significantly over-represented in the field and were therefore significantly over-represented in the pool of résumés and successful applicants.[43] Thus, the tool ‘learnt’ that men applicants were to be preferred. The tool therefore reportedly penalised applications with the word ‘women’s’ or the name of all-women’s colleges.[44] Further attempts to develop a tool that could crawl the web to identify candidates worth recruiting, trained on 50,000 terms that appeared in past candidates’ résumés, again resulted in a tool that favoured terms that appeared in men’s résumés, such as ‘executed’ and ‘captured’.[45] Despite these well-publicised problems, the internet is awash with companies attempting to sell employers automated recruitment and screening tools. As Kim summarises:

Data mining models are ... far from neutral. Choices are made at every step of the process — selecting the target variable, choosing the training data, labeling cases, determining which variables to include or exclude — and each of these choices may introduce bias along the lines of race, sex, or other protected characteristics. Because of the atheoretical nature of data mining, once these biases are introduced, they may be difficult to detect and eliminate. Mere correlation may be mistaken for causation, and the true basis for employer decision-making is obscured. Moreover, these biases may persist or even worsen over time because of limited opportunities for error detection and the operation of feedback effects. For all of these reasons, identifying and addressing the potential harms that biased algorithms cause should be matters of policy concern.[46]
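How historical outcomes can teach a model to penalise a term can be shown with a deliberately simplified sketch. It does not represent Amazon's tool or any real product: the scoring method (the past hire rate of résumés containing each term), the function names and the six-row hiring history are assumptions made purely for illustration.

```python
from collections import defaultdict


def train_token_scores(history):
    """Score each term by the hire rate of past résumés that contained it.

    `history` is a list of (terms, was_hired) pairs (hypothetical data).
    """
    seen = defaultdict(int)
    hired = defaultdict(int)
    for terms, was_hired in history:
        for term in set(terms):
            seen[term] += 1
            hired[term] += int(was_hired)
    return {term: hired[term] / seen[term] for term in seen}


def score(resume_terms, token_scores, default=0.5):
    """Average the learned term scores; unseen terms get a neutral default."""
    terms = set(resume_terms)
    return sum(token_scores.get(t, default) for t in terms) / len(terms)


# Hypothetical history in which résumés containing "women's" were never hired.
history = [
    (["python", "executed"], True),
    (["python", "captured"], True),
    (["java", "executed"], True),
    (["python", "women's"], False),
    (["java", "women's"], False),
    (["java", "captured"], True),
]
token_scores = train_token_scores(history)

# Two otherwise identical résumés, differing only in one term.
without_term = score(["python", "chess", "club"], token_scores)
with_term = score(["python", "women's", "chess", "club"], token_scores)
```

Nothing in this sketch refers to sex as a variable. The lower score for the second résumé arises solely from the pattern in the historical outcomes, which is the mechanism Kim describes.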

Thus, the EU Proposal for a Regulation of the European Parliament and of the Council Laying Down Harmonised Rules on Artificial Intelligence (Artificial Intelligence Act) and Amending Certain Union Legislative Acts sees the use of AI systems at work as high risk, in part due to their potential discriminatory impacts.[47]

‘Discrimination’, in this context, involves unfavourable treatment because of, or which disadvantages those with, a protected characteristic, such as age, gender or sex, sexuality, or ethnicity.[48] The automated application of ML algorithms presents five critical challenges to equality law. First, deploying ML algorithms in the workplace requires a significant body of data to develop and train them. The sheer scale of data held and used by ML algorithms distinguishes the situation now from that in the past[49] and raises distinct issues of privacy, particularly around the collection of sensitive personal information. At its best, this data could be used to reveal and proactively address discrimination at work;[50] this scale of data analysis may make the identification of discrimination and inequality in the workplace more readily possible.[51] More likely, though, these automated systems will replicate and reinforce discrimination seen in other labour market contexts, including the gender pay gap,[52] as can be seen in the Amazon example above. Algorithmic discrimination is likely to disproportionately affect those who are already most impacted by discrimination, including Indigenous and First Nations peoples.[53] As the European Economic and Social Committee has argued:

[T]he development of AI is currently taking place within a homogenous environment principally consisting of young, white men, with the result that (whether intentionally or unintentionally) cultural and gender disparities are being embedded in AI, among other things because AI systems learn from training data. This data should be accurate and of good quality, diverse, sufficiently detailed and unbiased. There is a general tendency to believe that data is by definition objective; however, this is a misconception. Data is easy to manipulate, may be biased, may reflect cultural, gender and other prejudices and preferences and may contain errors.[54]

Thus, data (and systems that depend on that data) can be both equality enhancing and equality detracting, reflecting the inherent ambivalence or ambiguity of digital technologies for equality at work.

Second, automated applications of algorithms are distinct in their scale and speed: automated processes can have significant impacts for organisations and individuals almost immediately, particularly in cases where there is no human oversight of automated decisions.[55] This scale and speed may mean that it is some time before the discriminatory impacts of automated processes are discerned, by which time significant repercussions would have already been effected.

Third, equality law is challenged by the lack of transparency around algorithms. To a non-technical audience, algorithms can often be opaque and hard to understand (a ‘black box’).[56] ML exacerbates the issue further, making it difficult for even the model designer to show or explain why the model gives a particular result. A lack of transparency means that it is difficult — if not impossible — to evaluate the extent to which discrimination is built into ML algorithms,[57] and even more complex for individuals to challenge discriminatory impacts. It also makes it difficult for employers or users of AI to understand how the system works or to identify problems before they occur. The people and organisations deploying algorithms often fail to understand the limits and confines of algorithms or where predictions are ‘coming’ from. As Köchling and Wehner argue:

[K]nowledge about the potential downsides of algorithmic decision-making is still in its infancy in the field of [human resource management] despite its importance due to increased digitization and automation in [human resource management]. ... From a practical point of view, it is problematic if large and well-known companies implement algorithms without being aware of the possible pitfalls and negative consequences.[58]

AI could then become a ‘cloak’ for biased practices, lending an air of objectivity to practices that simply replicate existing discrimination,[59] effectively ‘augment[ing] discriminatory practices’.[60]

Fourth, this lack of transparency could increase employers’ control over the workplace and its participants. How algorithmic tools are deployed in the workplace is rarely transparent. For example, companies do not share how ratings and feedback are assessed or used to create worker ‘ratings’.[61] This lack of transparency can increase the hierarchical control and power disparities that characterise the employment relationship.[62] Indeed, non-transparent monitoring can itself become a form of control: if employees are uncertain as to how algorithms work or what data they are drawing on, this can lead to work intensification for fear of ‘offending’ or negatively impacting the algorithm. The possibility of continuous individualised monitoring therefore encourages work intensification,[63] and — coupled with a lack of transparency — poses what Gregory describes as an ‘epistemic ris[k]’: a lack of transparency in how work is allocated ‘confounds [workers’] sense of self-employment and agency’.[64] This then increases the risks of gig work, ‘creating conditions where workers are fundamentally unsure about the rules of work’.[65]

Adams-Prassl therefore argues in the context of the gig economy that ‘the real point of rating algorithms ... [i]s to exercise employer control in myriad ways’.[66] Across all workplaces, ‘management automation enables the exercise of hitherto impossibly granular control over every aspect of the working day’.[67] For example,

[t]he algorithmic boss can hover over each worker like a modern-day Panoptes, the all-seeing watchman of Greek mythology: from vetting potential entrants and assigning tasks, to controlling how work is done and remunerated, and sanctioning unsatisfactory performance — often without any transparency or accountability.[68]

Control can be exercised directly and indirectly, through instructions and directives, but also through incentives, ‘nudge[s]’ and other forms of ‘soft control’.[69]

These risks of automated processes and increased employer control are amplified in cases of insecure work and at-will employment, particularly in scenarios where work can be terminated without a reason.[70] In Australia, this is likely to affect casual employees in particular, who have no guarantee of ongoing work. Indeed, Berg argues that technology is often used — or, rather, the human users of technology often use it — to make work more precarious, invisible, insecure and of lower quality.[71]

Fifth, algorithmic management — particularly that involving ML — engages a complex supply chain, as the gathering of training and testing data may be separate from the development of the algorithm itself, and separate again from the employer who deploys such technology. This complex supply chain means, for example, that training and testing data may be biased, selective, old or out of date, yet this is not evident to the algorithm developer,[72] or that employers might use a system and its algorithms with inputs outside the scope for which it was designed or trained.

It is critical, then, to create a meaningful framework for accountability for these algorithmic tools where discrimination occurs. Rather than seeing these developments as the inevitable price of technological progress, we must think creatively about how regulation might mitigate these risks for job quality and equality.[73] As Berg argues, ‘nothing about these trends is inevitable, as it does not reflect the technology per se, but how it is used, and the lack of governance of the new forms of employment that have emerged’.[74]

Discrimination and bias in algorithms and automated processes can emerge through three key issues. First, it might originate from poor quality or inappropriate input data for training or testing (‘garbage in, garbage out’), including through the use of biased, historical or out-of-date data, or data which under- or over-represents certain groups.[75] Second, it might reflect technical bias in the algorithm itself, derived from technical or human constraints.[76] Third, there might be emergent or user bias in how the algorithm is applied or deployed, either due to new societal knowledge, a mismatch between how the algorithm was designed and how it is ultimately deployed,[77] or poor quality organisational data in applying the algorithm.[78] Thus, any framework for accountability must address the quality of: data used (both in the test/train stage and in the deployment of algorithms); algorithms themselves, in addressing appropriate questions and issues; and the application of algorithms in and to workplaces.

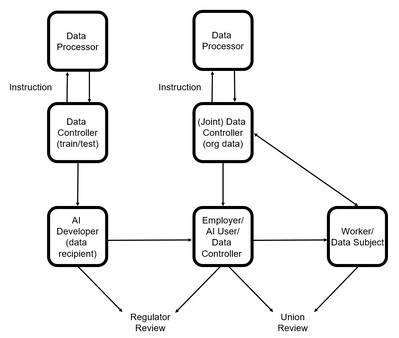

Accountability therefore can and should attach to different roles in different ways. Figure 1 illustrates the various roles and parties that might be involved in developing and deploying algorithmic tools in the workplace. These different roles are recognised, in part, by the GDPR, which differentiates between data controllers (who determine the why and how of data analysis) and data processors (who process data under the instruction of a data controller).[79] Equally, though, we must distinguish between the roles and accountabilities of AI developers and AI deployers (in this case, employers). Figure 1 therefore also illustrates the ways in which accountability and review might be implemented in this context.

Figure 1: Differentiated Roles in Deploying Algorithms in the Workplace

At present, though, it is unclear who is responsible for monitoring the risks of algorithmic decision-making: is it the technology companies who develop and market these algorithmic products? The employers using algorithmic tools? The companies gathering or processing data for testing and training, or the workplace application of algorithmic tools? Unions, collective groups of workers and/or regulators? Or the individual workers who might experience inequality as a result of algorithmic decision-making? Or, indeed, all of these groups? As Berg argues, it is a fallacy to believe we are shifting ‘responsibility’ to the technology itself:

Technology is not an independent force — it is developed by humans who make design decisions that impact the experiences, and lives, of its users. There is no such thing as a neutral technology — the choices made about how it works and how it is used are political. If the discretion of managers on scheduling shifts is given to the ‘algorithm,’ then it is not that the discretion has been eliminated but rather that it has been shifted to the data scientists and engineers who built the system.[80]

At the same time, though, the data scientists and engineers who built the system or algorithm would likely disclaim any responsibility for those decisions; rather, they would see the product as being deployed by the employer, with responsibility at the feet of the employer for any decisions made.[81] There is a risk, then, of an accountability vacuum, in perception if not in practice. If no individual or organisation sees themselves as accountable for automated decisions, there is less likely to be critical review of automated processes. For Adams-Prassl, this creates a ‘control/accountability paradox’, where control is concentrated in the employer, but accountability is diffuse and dispersed.[82] The Australian Human Rights Commission has therefore recommended the introduction of a rebuttable presumption that liability for decisions made by algorithmic tools lies with the person responsible for making the decision, regardless of how that decision is made.[83] While this might ensure at least some accountability, it does not reflect the complex division of roles and responsibilities in the AI development process.

That said, algorithms and automated processes are not the only labour law developments that obscure legal responsibility and potentially undermine employment rights. Indeed, this has already been seen in the fissuring of the workplace[84] and the use of sham contracting.[85] Adams-Prassl argues, though, that these are legal mechanisms, which can be dealt with via legal tools,[86] and are therefore distinct from the problems arising from algorithmic control:

[A]lgorithmic management does not rely on legal mechanisms to obfuscate control in order to evade responsibility — rather, diffuse and potentially inexplicable control mechanisms are inherent in the use of increasingly sophisticated rating systems and algorithms.[87]

In this part, then, I consider existing regulatory tools that might create a framework for accountability for digital inequality, particularly concerning the use of algorithmic tools in the workplace. Focusing on transparency, third-party and accessorial liability, and supply chain regulation, and drawing on comparative doctrinal examples from the GDPR, the APP and the Fair Work Act, as well as collectively negotiated solutions, I seek to identify possible paths forward for equality law.

Privacy and data protection law is the area of regulation that has engaged most directly with these issues to date. While algorithmic discrimination at work is not just an issue for privacy law, privacy law can nonetheless play an important role in the regulatory framework. Australian privacy law is structured around both state and federal legislation. Focusing on federal law, the Privacy Act 1988 (Cth) (‘Privacy Act’) establishes 13 Australian Privacy Principles,[88] with which ‘APP entities’ (public sector agencies and organisations) must comply.[89] Critically in the employment context, however, there is an exemption for employee records: so long as an act or practice of an employer is ‘directly related to’ an employee record of a current or former employment relationship, the APP do not apply.[90] This exemption is discussed further below; it has critical implications, though, for the limits (if any) on how employers handle and deploy employee data. Arguably, with this exemption, employee data (once collected) is effectively exempt from regulation under privacy law. This is a critical gap in the privacy law framework. The Privacy Act is also largely targeted at federal public entities and larger organisations (specifically those with an annual turnover exceeding $3 million); small businesses are exempt.[91] Smaller businesses and state and territory entities are likely better covered by privacy laws in the states and territories.

The APP were introduced by the Privacy Amendment (Enhancing Privacy Protection) Act 2012 (Cth);[92] there have been few substantive amendments to the APP in the decade since.[93] In 2019, the Digital Platforms Inquiry conducted by the Australian Competition and Consumer Commission (‘ACCC’) raised concerns ‘that the existing regulatory frameworks for the collection and use of data have not held up well to the challenges of digitalisation’, including the growth of digital platforms and the extensive collection of individual data.[94] While the ACCC’s report was primarily focused on protecting consumers — not workers — the scope of the subsequent review of the Privacy Act has been broader.[95] Indeed, the federal Office of the Australian Information Commissioner (‘OAIC’), in its submissions to the review, called for: the removal of the small business exemption;[96] the removal of the employee records exemption;[97] new provisions relating to automated decision-making;[98] and the enhancement of accountability measures, including those relating to data controllers and data processors.[99]

Many of the OAIC’s recommendations draw and build on the GDPR. While scholars have doubted the significance of the GDPR for enhancing employee rights (particularly given the existing right to privacy under art 8 of the European Convention on Human Rights (‘ECHR’)),[100] the GDPR still offers significant advances over the APP, particularly given the absence of a common law right to privacy or federal human rights instrument in Australia.[101] As the standard or benchmark for global data protection regulation, the GDPR offers important insights into how the APP might be strengthened.[102]

While acknowledging the potential limits of privacy law, then, there are six thematic ideas or principles originating from privacy law (particularly as it is framed in the GDPR) that can help to build our framework for addressing algorithmic discrimination at work.[103]

First, under privacy law, algorithms should be transparent in their operation, so the data they are based on, and their outcome, are understandable, communicable and able to be effectively challenged.[104] Thus, criteria (for recruitment, performance review or termination) should be clearly communicated and workers should be informed as to how and for what purpose their data is being collected.

This focus on transparency is evident in what is sometimes called the ‘right to explanation’ under the GDPR.[105] Articles 13(1) and 14(1) of the GDPR require data controllers to provide data subjects with a range of information when personal data is collected. Those collecting data must disclose, for example: their identity and contact details (and, if relevant, that of their representative); the purpose and legal basis of the data collection and processing; any additional potential recipients of the data; and, where applicable, the fact that the controller intends to transfer personal data to a third country or international organisation, and the safeguards in place for such transfer. Similar disclosure must be made in the Australian context.[106]

Under the GDPR, the data subject must also be informed before their data is used for any additional purpose beyond what was originally disclosed. Where this information is provided,

[t]he controller shall take appropriate measures to provide any information ... [or] communication ... relating to processing to the data subject in a concise, transparent, intelligible and easily accessible form, using clear and plain language ...[107]

In relation to data processing, data subjects also have a right to obtain information about whether their personal data is being processed, and, if so, have a right to access that data and find out the purposes of the processing.[108] Data subjects must also be informed of: the ‘existence of automated decision-making, including profiling’, along with ‘meaningful information about the logic involved’, the significance and the envisaged consequences of such processing;[109] and the period for which personal data will be stored (or, failing that, the criteria to be used to determine that period).[110] Data subjects must also be informed of the existence of the right to: request access to, and rectification or erasure of, personal data; request restriction of processing or to object to processing; data portability; withdraw consent at any time, if the data collection is based on consent; and lodge a complaint.[111]

Providing ‘meaningful information’ about the logic of automated decision-making may require providing access to the algorithm itself.[112] As the ILO has argued in its World Employment and Social Outlook 2021 report, having access to the source code of an algorithm for analysis is necessary to identify and address discrimination.[113] Such access, however, might be limited by trade agreements, trade secrets and ‘proprietary information’.[114] Governments could therefore consider adopting policies that favour open-source technologies, and/or enabling public regulatory agencies or specialised agencies to audit algorithms’ source code.[115] Regulators can therefore play a critical role in achieving algorithmic transparency. For example, in relation to the gig economy, the ACCC can exercise its compulsory information gathering powers under s 155 of the Competition and Consumer Act 2010 (Cth) (‘Competition and Consumer Act’) to compel platforms to provide evidence of how algorithms operate at a point in time.[116]

In the employment context, the EU Proposal for a Directive of the European Parliament and of the Council on Improving Working Conditions in Platform Work (‘Proposal for a Directive on Improving Working Conditions’) would require platforms to inform workers of: the use of automated decision-making ‘that significantly affect[s] those platform workers’ working conditions’, the parameters taken into account, and their relative importance; and the use of automated monitoring systems, and what actions are being monitored.[117] This information would also need to be provided to ‘workers’ representatives and national labour authorities’, if requested.[118] If these requirements were extended to all employers (and all workers), the provision of such information would go some way to addressing the information asymmetries between workers and employers in the context of algorithmic management.

Second, consent has become a critical issue in the context of privacy law and data processing.[119] Under art 6(1) of the GDPR, processing is lawful if one of the following applies: the data subject consents; it is necessary for the performance of a contract where the data subject is a party (or it is a necessary step, at the data subject’s request, prior to entering into a contract); it is necessary for compliance with a legal obligation; it is necessary to protect the ‘vital interests’ of the data subject or of another natural person; it is necessary for a task in the public interest or in the exercise of official authority; or it is necessary for the legitimate interests pursued by the controller or by a third party (except where overridden by the interests or fundamental rights and freedoms of the data subject). Thus, consent is likely to be critical for enabling processing in many contexts, particularly given the right to privacy under art 8 of the ECHR, which will weigh against the pursuit of the controller’s ‘legitimate interests’. The GDPR defines ‘consent’ as

any freely given, specific, informed and unambiguous indication of the data subject’s wishes by which he or she, by a statement or by a clear affirmative action, signifies agreement to the processing of personal data relating to him or her.[120]

Article 7(4) of the GDPR also says:

When assessing whether consent is freely given, utmost account shall be taken of whether, inter alia, the performance of a contract, including the provision of a service, is conditional on consent to the processing of personal data that is not necessary for the performance of that contract.

Consent can be withdrawn at any time and data subjects must be informed of this.[121] Where consent is withdrawn, data subjects have a right to erasure.[122] While the ability of workers to provide meaningful individual consent in the context of the employment relationship is questionable, this could also encompass collective consent.[123]

A substantive understanding of consent has also emerged under the APP.[124] Under the APP, sensitive information may only be collected with consent, even where the collection is reasonably necessary for one or more of the entity’s functions or activities.[125] However, this requirement does not apply if the collection is: required or authorised by or under law or a court/tribunal order;[126] for a permitted general situation;[127] or for a permitted health situation (such as to provide a health service or for research purposes).[128] Consent is also not required if the entity is an enforcement body and it reasonably believes that ‘the collection of the information is reasonably necessary for, or directly related to, one or more of the entity’s functions or activities’;[129] or the entity is a non-profit organisation, the ‘information relates to the activities of the organisation’ and the ‘information relates solely to the members of the organisation, or to individuals who have regular contact with the organisation in connection with its activities’.[130]

The nature of consent required under the APP was considered in the unfair dismissal case of Lee v Superior Wood Pty Ltd (‘Lee’).[131] In that case, Lee was directed to provide his fingerprint for use in a new workplace security system but refused.[132] The Tribunal held that this was a breach of privacy law:

[T]he direction to Mr Lee to submit to the collection of his fingerprint data, in circumstances where he did not consent to that collection, was not a lawful direction. Moreover we consider that any ‘consent’ that he might have given once told that he faced discipline or dismissal would likely have been vitiated by the threat. It would not have been genuine consent. ... A necessary counterpart to a right to consent to a thing is a right to refuse it. A direction to a person to give consent does not vest in that person a meaningful right at all.[133]

Thus, in the employment context, consent obtained via a direction to consent (backed by the threat of disciplinary action or dismissal) will not satisfy the APP. Consent must be genuine. Again, this flags the very real question of whether employees can meaningfully consent (or meaningfully refuse consent) to employer data requests in the workplace.

Third, privacy law places obligations on entities to ensure that data are accurate and up to date. For example, the GDPR provides data subjects with a right to rectification:

The data subject shall have the right to obtain from the controller without undue delay the rectification of inaccurate personal data concerning him or her. Taking into account the purposes of the processing, the data subject shall have the right to have incomplete personal data completed, including by means of providing a supplementary statement.[134]

Where the accuracy of data is contested, data subjects have a right to obtain restrictions on data processing for such a period as enables the accuracy of data to be verified.[135]

In Australia, an organisation ‘must take such steps (if any) as are reasonable in the circumstances’ to ensure personal information it collects, uses or discloses is ‘accurate, up-to-date, complete and relevant’,[136] and to correct the information to ensure it is ‘accurate, up-to-date, complete, relevant and not misleading’.[137] This is paired, too, with a (limited) right to access personal information held by the entity.[138]

Fourth, privacy law can place substantive limits on when data can be collected. In Australia, for example, organisations ‘must not collect personal information (other than sensitive information) unless the information is reasonably necessary for one or more of the entity’s functions or activities’.[139] The EU Proposal for a Directive on Improving Working Conditions goes further, and would require that platforms ‘not process any personal data concerning platform workers that are not intrinsically connected to and strictly necessary for the performance of the contract’, prohibiting the processing of data relating to emotional and psychological states, health, private conversations, and prohibiting the collection of data from non-work time.[140]

Fifth, any automated decision or outcome should be overseen by a human decision-maker — an approach described as ‘human-in-command’.[141] The GDPR provides, for example, that

[t]he data subject shall have the right not to be subject to a decision based solely on automated processing, including profiling, which produces legal effects concerning him or her or similarly significantly affects him or her.[142]

However, automated decision-making is allowed where it is: ‘necessary for entering into, or performance of, a contract between the data subject and a data controller’; ‘authorised by Union or Member State law’; or ‘based on the data subject’s explicit consent’.[143] Even in these cases, though, EU law, member state law or the data controller itself must implement ‘suitable measures to safeguard the data subject’s rights and freedoms and legitimate interests’.[144] For data controllers, this includes (at a minimum) a right for data subjects to: obtain human intervention; express their point of view; and contest the decision.[145]

These limits on automated decision-making are critical for ensuring that mistakes or inaccuracies are corrected (ideally, before being implemented or adopted). They also place accountability with a human decision-maker, which is essential if algorithms and automated processes are not to be used to obfuscate legal responsibility.[146] The effectiveness of the GDPR’s provisions relating to automated processing, though, may well turn on how we interpret ‘legal effects’ and ‘significantly affects’.[147]

Sixth, and finally, the adoption of algorithms or automated processing should follow a process of evaluation, to ensure the technology is being used for a legitimate purpose, is appropriate for achieving that purpose, and includes appropriate safeguards for individual rights.[148] This takes the form, for example, of data protection impact assessments in the EU.[149] It could also, for example, be embedded in collective bargaining and negotiation (see discussion in Part III(D) below).[150] The EU Proposal for a Directive on Improving Working Conditions, for example, would require platforms to evaluate the health and safety risks of automated monitoring and decision-making, assess the adequacy of existing safeguards, and ‘introduce appropriate preventive and protective measures’ to address those risks.[151] Further, it would explicitly extend information and consultation requirements to ‘the introduction of or substantial changes in the use of automated monitoring or decision-making systems’.[152] Again, these proposed measures could beneficially be extended to the workforce more broadly.

While these ideas and principles are progressively being implemented in EU law — including through the GDPR and the revised Council of Europe’s Convention for the Protection of Individuals with Regard to Automatic Processing of Personal Data[153] — De Stefano argues that there is still a need for specific standards, as they relate to work, to make these provisions meaningful in practice.[154] This could be achieved, in part, by adopting the EU Proposal for a Directive on Improving Working Conditions, but it would also require the adoption of measures relating to non-platform work.

More generally, though, privacy law is ultimately a limited tool for addressing the limits of data and algorithms at work. For example, the APP contain an exemption for employee records, so long as the act or practice is directly related to an employee record for a current or former employment relationship.[155] ‘Employee record’ is defined as

a record of personal information relating to the employment of the employee. Examples of personal information relating to the employment of the employee are health information about the employee and personal information about all or any of the following:

(a) the engagement, training, disciplining or resignation of the employee;

(b) the termination of the employment of the employee;

(c) the terms and conditions of employment of the employee;

(d) the employee’s personal and emergency contact details;

(e) the employee’s performance or conduct;

(f) the employee’s hours of employment;

(g) the employee’s salary or wages;

(h) the employee’s membership of a professional or trade association;

(i) the employee’s trade union membership;

(j) the employee’s recreation, long service, sick, personal, maternity, paternity or other leave;

(k) the employee’s taxation, banking or superannuation affairs.[156]

This is broad enough to cover most existing forms of data that might inform algorithmic management. However, in Lee, it was held that the employee records exemption only applies to

records obtained and held by an organisation. A record is not held if it has not yet been created or is not yet in the possession or control of the organisation. The exemption does not apply to a thing that does not exist or to the creation of future records.[157]

Thus, the initial collection of new employee data must still comply with the APP (including, for example, by issuing a privacy collection notice and gaining meaningful consent for the collection of sensitive data).[158] Once data are collected, though, the exemption is enlivened and the APP no longer apply.[159] This is significant, for example, in relation to the need to ensure data are up-to-date and accurate; presumably this obligation does not apply to employee data that have already been collected. Clearly, then, privacy law has not been drafted with a view to maintaining standards in the employment context.

More fundamentally, too, many rights under privacy law are focused on processes and procedures, rather than substantive outcomes.[160] This compares markedly with discrimination law, which is ultimately concerned with disparate outcomes.[161] Further, as Kim emphasises, by focusing on individual rights — to data, transparency, access and data rectification — privacy law tends to ignore forms of group-based disadvantage which are critical to equality law.[162] This is a limitation of both the APP and the GDPR; it means there is a need, then, to look beyond privacy law to secure accountability for discrimination.

Another potential route to ensuring accountability, particularly where multiple actors are performing distinct roles in deploying algorithmic tools, might be through third-party or accessorial liability provisions. Under the Fair Work Act, for example, a person or company ‘involved’ in the contravention of a workplace law (including the taking of ‘adverse action’ on the basis of a protected characteristic) is taken to have contravened the provision.[163] Being ‘involved’ includes: aiding, abetting, counselling or procuring a contravention; inducing the contravention (including through threats or promises); being ‘in any way, by act or omission, directly or indirectly, knowingly concerned in or party to the contravention’; and conspiring with others to effect the contravention.[164]

Third-party or accessorial liability can extend to a business in the company’s supply chain.[165] This legal footing has allowed the Australian Fair Work Ombudsman (‘FWO’) to emphasise supply chain risks in its enforcement activity; indeed, this was one of the FWO’s priorities in 2019–20.[166] Supply chain litigation of this nature is seen as one tool for addressing the fissuring and fragmentation of the workplace and employment structures.[167] While such litigation has typically focused on ‘lead firms’,[168] it could also be deployed to address the role of algorithm developers in enabling or encouraging a breach of workplace laws. Indeed, this is fully consistent with the push to make firms accountable for procurement decisions,[169] including in the area of digital technologies.

This approach, though, requires strategic enforcement of these provisions. Indeed, it may hinge on regulators — like the FWO — taking action in this area. Given the risks of enforcement and the relatively innovative nature of these legal tools, it is unlikely that individual workers will be willing to pursue such a claim or be able to do so effectively.[170] As Hardy and Howe argue, ‘in the absence of any authoritative decision, the scope of these statutory provisions remains indeterminate’.[171] This is likely to deter individual enforcement of these provisions.

Another possible regulatory solution, then, would be to collectively negotiate limits or improvements to the use of algorithmic management at work. While an individual right to challenge automated processes and decisions is important, individual enforcement is unlikely to be sufficient as algorithmic management becomes more prevalent and diffuse.[172] De Stefano therefore sees an important role for collective action in this area:

[C]ollective rights and voices will be crucial. Collective agreements could address the use of digital technology, data collection, and algorithms that direct and discipline the workforce, ensuring transparency, social sustainability, and compliance with these practices with regulation. Collective negotiation would also prove pivotal in implementing the ‘human-in-command’ approach at the workplace. Collective bargaining could also regulate issues such as the ownership of the data collected from workers and go as far as creating bilateral or independent bodies that would own and manage some of the data. All this would also be consistent with collective bargaining’s fundamental function as an enabling right and as a rationalization mechanism for the exercise of employers’ managerial prerogatives, allowing the movement away from a purely unilateral dimension of work governance.[173]

This form of collective negotiation and regulation is specifically envisaged by the GDPR. Article 88(1) says:

Member States may, by law or by collective agreements, provide for more specific rules to ensure the protection of the rights and freedoms in respect of the processing of employees’ personal data in the employment context ...

A further possibility, too, is to use collective voice to redesign new technologies, and the work systems in which they operate, to promote accountability and equality. For Berg, this should be achieved through participatory design, where users (including workers, managers, customers, union representatives, labour authorities and regulators) are included in the design process.[174] This process would be assisted and enabled by existing mechanisms for collective voice; thus, Berg argues that collective labour rights should be extended to all workers.[175] De Stefano similarly sees collective rights as ‘enabling rights’ critical to the enforcement of other workplace rights and for balancing workplace power disparities.[176] As the European Economic and Social Committee has emphasised, this form of dialogue and discussion is also essential for building workers’ trust in AI.[177]

In terms of how this might work in practice, one example comes from an agreement between the Spanish government and the social partners on the labour rights of those working on digital platforms.[178] The agreement expands workers’ rights to information, providing that platforms must inform workers’ legal representatives of any algorithmic formula that determines working conditions.[179] Thus, this right to transparency extends and reinforces rights under privacy law in the employment sphere.

A less technically adapted option lies, for example, in the University of Melbourne Enterprise Agreement 2018, which says:

[A]n Employee may raise a grievance ... on matters pertaining to procedural fairness of a performance assessment process where there is a basis to consider that ... assessment of performance was not consistent or transparent against ... the expectations and objectives of the position ...[180]

While not directly pertaining to digital technologies, data or algorithms as such, this clause raises the possibility that the non-transparent use of data or algorithms in performance processes might be the subject of an employee grievance. This offers one way, then, of integrating the principles of privacy law into the employment relationship and of challenging employer use of non-transparent new technologies.

That said, collectively bargained solutions are only likely to be possible in the most protected workplaces, including those with a union presence, enterprise agreement or strong employee advocacy.[181] Achieving collective solutions will be more difficult in workplaces with no union presence, where employees are dispersed, engaged as independent contractors or engaged through labour hire arrangements.[182]

Thus, this emphasises the need for all workers — regardless of employment status — to have the right to collectively organise. In Australia, this has been advanced, in part, by the ACCC’s grant of a class exception under the Competition and Consumer Act, allowing groups of small businesses (and the self-employed) to undertake some forms of collective bargaining without contravening competition law.[183] This overcomes, to some extent, the limited scope of the Fair Work Act, which extends bargaining and strike protection to ‘employees’ only (not the self-employed).[184] As McCrystal and Hardy argue, however, the ACCC’s exception is focused ‘on the removal of impediments to collective bargaining, rather than being about the provision of structural supports’.[185] This may limit its practical capacity to support effective bargaining and collective action for those who are not ‘employees’.

From this broad discussion of privacy and data protection law, third-party and accessorial liability, and collective action, we can discern six critical lessons for equality law. First, there are fundamental ideas and principles that equality law should advance to better address the risks of algorithms and algorithmic management at work. These are transparency, consent, accuracy, the ‘human-in-command’ approach and meaningful evaluation. Second, collective action and voice can and should play an important role in developing, implementing and scrutinising these ideas in the organisational context. Third, statutory agencies can play a critical role in advancing these principles, either through strategic enforcement and litigation (in the case of the FWO) or through compulsory information gathering (as by the ACCC). Fourth, there are existing regulatory tools (as under the Fair Work Act) that enable accessorial liability for workplace infringements, which could inform our development of accountability standards for the different parties and roles involved in algorithm development and implementation. Fifth, some of the ideas and principles that are critical to privacy law (such as consent) do not readily apply to the employment context. There are very real questions about whether an employee can meaningfully consent to data collection and processing if they are subject to the threat of discipline or dismissal for noncompliance. This means, then, that we must be particularly attuned to the power differentials that pervade work, and how this might demand different regulatory tools in the employment context. Finally, this discussion has illustrated the need for equality law to respond proactively to the risks of algorithms and algorithmic management at work; we cannot rely on other areas of law to address the substantive discriminatory impacts of these technologies.

So what, then, for equality law? In the US context, Kim argues that ‘a mechanical application of existing disparate impact doctrine will fail to meet the particular risks that workforce analytics pose’.[186] Equality laws in other jurisdictions, though, are arguably more adaptable to the risks of algorithmic discrimination. In the EU and United Kingdom (‘UK’) context, for example, Adams-Prassl and others have argued that algorithmic discrimination could be seen as a form of direct discrimination.[187] The Equality Act 2010 (UK) (‘Equality Act UK’) defines direct discrimination as where, ‘because of a protected characteristic, A treats B less favourably than A treats or would treat others’.[188] Adams-Prassl and others argue that this could be satisfied, for example, if algorithms directly rely on protected characteristics to inform decision-making; or use criteria that act as proxies for protected characteristics (that is, where the criterion and a protected characteristic are inextricably linked).[189] This sort of ‘proxy’ discrimination could be purposefully coded into an algorithm, or ‘learnt’ by the algorithm through analysing data.[190] The issue, of course, is how ‘perfectly’ the proxy must align with the protected characteristic to be regarded as direct (rather than indirect) discrimination.[191]

Adams-Prassl and others further suggest that algorithms may be directly discriminatory if protected characteristics form part of the algorithm’s ‘subjective mental processes’ — essentially, if algorithms manifest automated forms of unconscious human bias.[192] The authors use the example of Amazon’s recruitment tool, which graded applications with masculine terms more highly.[193] If algorithms learn to mimic or replicate the bias of past human decision-makers — which is highly likely when the training data set consists of past successful job applications — then ‘[t]he legal position cannot be any different because unfavourable treatment is meted out by an algorithm, rather than a human’.[194]

It is unlikely that this analysis of EU and UK law is readily transferable to the Australian context. First, Australian discrimination laws define ‘direct discrimination’ differently, depending on the jurisdiction and protected ground, and Australia’s federal system makes analysis more complex, with discrimination regulated through discrimination and industrial law at state, territory and federal levels.[195] While ‘proxy’-style arguments (as put forward by Adams-Prassl and others) might be tenable in some jurisdictions, they will founder in others.

Sheard argues that, in some cases, proxy-based discrimination may be captured by the ‘characteristics extension’ in direct discrimination law.[196] For example, the Age Discrimination Act 2004 (Cth) (‘Age Discrimination Act’) prohibits discrimination on the basis of age, as well as characteristics that appertain generally to age, or are generally imputed to age.[197]

Sheard argues, though, that proxy characteristics (like the masculine résumé terms in the Amazon recruitment example) are typically too far removed from a protected characteristic to fall within the characteristics extension.[198] That is, proxy characteristics are unlikely to be regarded as appertaining ‘generally’ or being ‘generally’ imputed to a particular characteristic.

A further issue for ‘proxy’-style arguments arises from the comparator requirement in federal discrimination law. For example, the Age Discrimination Act defines direct discrimination as where

the discriminator treats or proposes to treat the aggrieved person less favourably than, in circumstances that are the same or are not materially different, the discriminator treats or would treat a person of a different age; and the discriminator does so because of [age].[199]

The requirement that treatment be ‘in circumstances that are the same or are not materially different’ (the comparator requirement) has proven to be a significant barrier to establishing claims of direct discrimination in federal discrimination law.[200] Further, it likely means that Australian courts (at least federal courts) will be unsympathetic to ‘proxy’-style arguments in direct discrimination cases.

In Purvis v Department of Education and Training (NSW) (‘Purvis’), for example, the High Court accepted the argument that the claimant was excluded from school because of his violent behaviour, not his disability.[201] Their Honours also accepted that the relevant comparator should be a student who exhibited the same violent behaviour, but without the claimant’s disability.[202] This was despite the fact that the claimant’s behaviour was indistinguishable from, and caused by, his disability.[203] Smith describes this as ‘the separation of a protected trait and a manifestation of the trait’.[204]

If the High Court’s approach to the comparator requirement precluded a finding of discrimination in Purvis, it is highly unlikely that the High Court (or other courts, which have largely followed the High Court’s lead on this issue) will accept ‘proxy’-style arguments in other cases, where the link between a protected characteristic and treatment is even less clear-cut.[205] Indeed, Smith argues that, following Purvis, while the blatant use of protected characteristics is not allowed under Australian discrimination law, stereotypes of particular groups might still inform decision-making, so long as those stereotypes or statistical averages actually apply to the claimant.[206] This is likely to pose a significant barrier to ‘proxy’-style arguments: in the Amazon recruitment tool example, the use of ‘masculine’ terms to filter or grade applications would not be seen as a form of direct discrimination, so long as that standard was applied consistently. Smith therefore argues that Purvis reflects a focus on formal (rather than substantive) equality in direct discrimination cases in Australia.[207] This creates pressure on claimants to comply with established standards and rules in the workplace,[208] which could include, for example, standards and rules set through algorithmic assessments. In Australian federal discrimination law, then, attempts to challenge (algorithmic) standards and rules are likely to require resort to indirect discrimination law, unless (algorithmic) standards are applied inconsistently.[209]

There may be greater potential to challenge algorithmic tools and their discriminatory impacts via direct discrimination claims in some states or territories. For example, the Australian Capital Territory (‘ACT’) and Victoria define direct discrimination as requiring unfavourable treatment, rather than less favourable treatment.[210] This means there is arguably no comparator requirement in the ACT or Victoria.[211] Further, discrimination law in the Northern Territory (‘NT’) has arguably removed the distinction between direct and indirect discrimination: discrimination includes ‘any distinction, restriction, exclusion or preference made on the basis of an attribute that has the effect of nullifying or impairing equality of opportunity’.[212] Thus, any consideration of how overseas arguments might apply in the Australian discrimination law context requires a nuanced appreciation of the diversity of statutory frameworks across federal, state and territory jurisdictions.

A second challenge in attempting to apply EU/UK arguments to the Australian context relates to the matter of proof. Adams-Prassl and others’ argument hinges — in its practical application — on the presence of the reverse burden of proof in EU and UK discrimination law;[213] once a claimant establishes a prima facie case of direct discrimination, the burden shifts to the respondent to provide a non-discriminatory explanation for their actions.[214] This reverse burden is typically not present in Australian direct discrimination law, though it does exist (at least to some extent) under the Fair Work Act adverse action provisions[215] and in the ACT.[216]

Indeed, even with a reverse burden of proof in the EU/UK context, Adams-Prassl and others acknowledge that difficulties of proof may arise due to ‘technical complexity and algorithmic opacity’.[217] Adams-Prassl and others argue that, ‘at least in principle’, it should be easier to identify the factors leading to bias in the context of an algorithm than in the context of a human decision-maker;[218] algorithms are arguably more honest than humans. That said, the push for explicable and explainable AI reveals that AI algorithms (like humans) are rarely forthcoming about the reasons for their decisions, unless (unlike humans) they are explicitly programmed to be transparent.[219] While algorithmic outputs might be reproducible (that is, if you put the same inputs in, you should be able to reproduce the original output), this does not mean that algorithmic outputs, and the reasons for those outputs, are explicable. Adams-Prassl and others’ optimism about the traceability of algorithmic decisions is likely confined to a subset of explicable algorithmic tools (not algorithms more generally). Further, concerns have been raised that it is possible to create deceptive ML algorithms that appear ‘fair’ and explicable, yet consider both ethically acceptable and unacceptable criteria (‘fairwashing’).[220] This likely poses significant problems for establishing causation in cases of direct discrimination; these difficulties of proof are likely to be catastrophic in Australian jurisdictions without a reverse burden of proof.

As Adams-Prassl and others note, too, ‘biased human decision-makers can engage in an ex post facto rationalisation of decisions’.[221] This equally applies to (human) decisions informed by algorithmic tools: decision-makers might later deny that the (discriminatory) algorithm informed their decision-making. This reflects the emphasis on the decision-maker’s state of mind when making the decision in federal industrial law in Australia.[222] Overall, then, while Adams-Prassl and others seem confident that direct discrimination might offer a route for challenging algorithmic discrimination in the EU and UK, the evidentiary and ‘proxy’-style barriers to pursuing such a claim in the Australian context appear highly problematic.

Sheard also raises concerns that decisions made by an algorithm may not meet the requirement ‘that a “person” engage in the [directly] discriminatory treatment’,[223] particularly in cases where an employer is not aware of the algorithmic bias or discriminatory treatment.[224] The Equality Act UK also specifies that direct discrimination is effected by a ‘person’.[225] For Adams-Prassl and others, though, this is no barrier to pursuing a claim of direct discrimination. The authors simply assert that

[a]n inherently discriminatory criterion cannot be any less discriminatory merely because it is applied by a computer system, rather than by a human through a paper process. ... Given that intentionality is irrelevant when establishing direct discrimination, the outcome should be no different if the algorithm, rather than the human trainer, created the indissociable proxy. The discrimination stems from the application of the criterion, not from the mental processes of the decision-maker.[226]

Sheard is more sceptical, and therefore recommends legislative reform to include an express statutory rule of attribution, like that in s 495A of the Migration Act 1958 (Cth), so that algorithmic decisions are taken to be made by the employer in control of that system.[227] That said, the Equal Opportunity Act 2010 (Vic) (‘Equal Opportunity Act Vic’), for example, includes a broad definition of ‘person’ as including unincorporated associations and, for natural persons, those of any age.[228] A purposive interpretation would not confine this provision to natural persons. It remains to be seen how courts respond to these challenging interpretive questions.

Instead, then, we might consider whether a claim of indirect discrimination might be more successful. The Equal Opportunity Act Vic, for example, defines indirect discrimination as where

a person imposes, or proposes to impose, a requirement, condition or practice —

(a) that has, or is likely to have, the effect of disadvantaging persons with an attribute; and

(b) that is not reasonable.[229]

The Equal Opportunity Act Vic also notes that it is ‘irrelevant whether or not that person is aware of the discrimination’[230] and that motive is also irrelevant.[231] On the surface, this appears to be broad enough to capture discrimination effected through algorithmic management. For example, using an algorithmic tool to determine recruitment or promotion which disproportionately affects certain groups in practice is likely to be seen as a ‘requirement, condition or practice’.[232] The imposing of the ‘requirement, condition or practice’ avoids the difficulty of determining if an algorithm is a ‘person’, as may be required to establish direct discrimination.[233] Further, the focus on ‘effects’, not causation or motive, is likely to avoid legal issues arising from the use of predictive grounds that are not protected characteristics (that is, ‘proxy’-style issues).[234]

That said, indirect discrimination tends not to be a preferred route for claims in Australia,[235] in part because of the complexity and technicality of the legal provisions, and in part because indirect discrimination (unlike direct discrimination) can generally be justified in some way. Indeed, in a survey of Australian age discrimination law, I found that only 17 of 108 cases raised questions of indirect discrimination; and only one of 12 successful cases in the sample related to indirect age discrimination.[236] In that sample, claimants variously struggled to establish relevant groups for comparison, to show their own inability to comply with the requirement, or to establish that the requirement was unreasonable.[237]

This study flags some of the problems that might be encountered in using indirect discrimination law to challenge algorithmic tools. Again, as in cases of direct discrimination, there may be evidentiary difficulties in establishing relevant disadvantage; issues of proof are again exacerbated by the lack of transparency in algorithmic decision-making. Further, establishing indirect discrimination may require claimants to produce statistical evidence to show disadvantage;[238] this may prove expensive and practically problematic for claimants to produce (due to information asymmetries between claimants and respondents) and difficult for courts to consider or analyse meaningfully.[239]
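By way of illustration only, the simplest form of the statistical comparison contemplated above might be sketched as follows. The sketch assumes that a claimant has somehow obtained, for each applicant, a group label and the outcome the tool produced; the function names (`selection_rates`, `rate_ratio`), the group labels and the figures are all hypothetical and are not drawn from any case, statute or study cited in this article.

```python
from collections import Counter


def selection_rates(records):
    """Return the share of favourable outcomes for each group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True if the tool produced a favourable outcome for that person.
    """
    totals = Counter()
    favourable = Counter()
    for group, selected in records:
        totals[group] += 1
        if selected:
            favourable[group] += 1
    return {group: favourable[group] / totals[group] for group in totals}


def rate_ratio(rates, group, reference):
    """Ratio of one group's selection rate to a reference group's rate."""
    if rates[reference] == 0:
        raise ValueError("reference group has no favourable outcomes")
    return rates[group] / rates[reference]


if __name__ == "__main__":
    # Entirely hypothetical shortlisting outcomes, invented for illustration.
    records = (
        [("under_45", True)] * 30 + [("under_45", False)] * 70
        + [("45_and_over", True)] * 12 + [("45_and_over", False)] * 88
    )
    rates = selection_rates(records)
    print(rates)
    print(round(rate_ratio(rates, "45_and_over", "under_45"), 2))
```

A ratio below 1 indicates only that the first group received favourable outcomes at a lower rate than the reference group. Whether that amounts to relevant ‘disadvantage’, and whether the requirement is nonetheless reasonable, remain legal questions that the arithmetic does not answer. The computation itself is trivial; the practical obstacle is obtaining the underlying records, which typically sit with the respondent.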

Further, many claims of indirect discrimination are likely to hinge on what is ‘reasonable’ (a defence that does not apply to claims of direct discrimination).[240] In Victoria, the burden of proving that a requirement, condition or practice is reasonable is on the person who imposes, or proposes to impose, that requirement;[241] that is, typically, the employer. What is ‘reasonable depends on all the relevant circumstances of the case’, including the ‘nature and extent of the disadvantage’ occasioned; whether the ‘disadvantage is proportionate to the result sought’; the ‘cost of any alternative’; the respondent’s financial circumstances; and ‘whether reasonable adjustments or reasonable accommodations could be made’ to reduce the disadvantage.[242]

Overall, then, while Sheard concludes that indirect discrimination could provide redress for biased algorithmic hiring processes, ‘this will require judicial understanding of complex socio-technical algorithmic systems and engagement with difficult questions of public policy’.[243] Given the sheer absence of case law to date, it is difficult to predict how this will play out in practice. The success of these provisions in addressing algorithmic discrimination will hinge upon how they are interpreted and applied; without a sympathetic, purposive interpretation, claims of indirect discrimination may be unlikely to succeed.[244] Sheard therefore suggests that there is a need to re-craft discrimination law to better address the risks posed by algorithmic discrimination, including through issuing guidelines or standards to assist with interpreting and applying indirect discrimination provisions.[245]

More fundamentally, too, we must acknowledge that the enforcement of discrimination law — whether relating to direct or indirect discrimination — is fraught in practice, due to extensive reliance on individual enforcement mechanisms, the use of confidential conciliation to resolve disputes[246] and limited individual willingness to pursue a complaint. The reasons for this are complex and systemic, and have been addressed by detailed empirical work elsewhere.[247] Algorithmic discrimination likely exacerbates these problems of individual enforcement, given it is an area characterised by a lack of transparency, over-reliance on indirect discrimination as a legal tool and high dependence on statistical data.[248] The use of algorithmic tools in recruitment is likely to be particularly difficult to challenge via individual enforcement mechanisms, given applicants are rarely informed that such tools are in use and claims relating to recruitment rarely proceed to court or tribunal.[249] In sum, then, it is likely futile to rely on individual enforcement of discrimination law to address algorithmic discrimination at work, at least as the law is currently framed.

Instead, then, we need to think about how equality law might be reconfigured to move beyond individual enforcement. Positive equality duties (which shift the responsibility for advancing equality to organisations, not individuals) have significant potential here.[250] Positive duties are well-established features of equality law in the UK, though their scope is limited to public authorities and those exercising public functions,[251] and their enforcement via judicial review is fraught.[252] The adoption of positive equality duties in Australian discrimination law has been far more limited, but has advanced significantly since 2022. Prior to 2022, arguably only the Equal Opportunity Act Vic imposed a positive duty on employers.[253] In 2022 and 2023, the Sex Discrimination Act 1984 (Cth) (‘Sex Discrimination Act’),[254] Discrimination Act 1991 (ACT)[255] and Anti-Discrimination Act 1992 (NT)[256] were amended to impose positive duties on employers.

The Victorian duty — which has been emulated in the NT, ACT and federally in the Sex Discrimination Act — requires that those with a duty not to engage in discrimination ‘take reasonable and proportionate measures to eliminate that discrimination, sexual harassment or victimisation as far as possible’.[257] Whether a measure is reasonable and proportionate depends on the size, nature, circumstances, resources and priorities of the business; and the practicability and cost of the measures.[258] Enforcement of the duty depends on the Victorian Equal Opportunity and Human Rights Commission conducting an investigation.[259] However, only one investigation has been conducted to date.[260] Thus, while the Victorian duty is arguably broad enough to cover all forms of algorithmic discrimination, its impact in practice has been limited by a lack of enforcement.[261]

Equality law scholars have therefore devoted considerable attention to considering how positive duties might be expanded and adapted to the Australian context.[262] To address algorithmic discrimination specifically, I have argued that a positive duty should be imposed on online platforms to ‘address discrimination or equality issues, including through the analysis and publication of data’.[263] Given the growing use of data and automated processing at work, beyond online platforms, this duty should also be directed to achieving equality in the use of data and AI more broadly.[264] Indeed, positive equality duties could be a critical tool for achieving transparency in automated processes.[265] This approach also speaks to Burdon and Harpur’s argument that the processes effected through information privacy law could be used to address the structural discrimination created by algorithmic management and data processing.[266]

Thus, we can use the principles and thematic ideas originating from privacy law (articulated in Part III(A) and summarised in Part III(E)), as well as equality law scholarship, to help inform the structure and processes of positive duties for addressing algorithmic discrimination at work. Drawing on privacy and data protection law, our attention in developing and adapting positive duties should be on advancing transparency, consent, accuracy, substantive limits on the collection and use of data, human decision-making and processes of evaluation. Building on equality law scholarship, our focus in developing positive duties should be on achieving targeted transparency, an action cycle of engagement to support enforcement — including via consultation[267] — as well as substantive and procedural change,[268] to move beyond a ‘box-ticking’ or compliance approach to positive duties.[269]

Drawing on these principles, then, an expanded positive duty, directed to employers, could include requirements to:

• give proper consideration and take proportionate action to eliminate discrimination and advance equality of opportunity;[270]

• collect, analyse and publicise data on the protected characteristics of those in the workplace;[271]

• report on what data is being collected, for what purpose, and how it is being processed and used, and data quality control measures adopted;

• report on what algorithms or automated systems are being adopted, including:

    ◦ how the algorithm or automated system operates, and for what purpose;

    ◦ the training, testing and organisational data being deployed, data quality measures in place, and how recently data were reviewed or renewed;

    ◦ how the system operates in the context of the specific workplace;[272]

    ◦ the role of human oversight in the process; and

    ◦ outcomes of algorithms or automated systems across the workforce, including across protected characteristics;

• adopt policies to demonstrate what is being done to address and eliminate discrimination; and

• consult and engage with workers and worker representatives in the collection of data, and adoption and use of algorithms or automated systems.